Special Issue: Biomedical Imaging and Analysis in the Age of Big Data and Deep Learning

Volume 108, Issue 1

January 2020

Guest Editors

Special Issue Papers

By J. S. Duncan, M. F. Insana, and N. Ayache

By R. J. G. van Sloun, R. Cohen, and Y. C. Eldar

This article provides an overview of the use of deep, data-driven learning strategies in ultrasound systems, from the front end to advanced applications. The authors discuss these new computational approaches across all aspects of ultrasound imaging, ranging from ideas at the interface of raw signal acquisition (including adaptive beamforming) and image formation, to learning compressive codes for color Doppler acquisition, to learning strategies for clutter suppression.

By K. de Haan, Y. Rivenson, Y. Wu, and A. Ozcan

This article provides an overview of efforts to advance computational microscopy and optical sensing systems for microscopy using deep neural networks. The work first reviews the basics of inverse problems in optical microscopy and then outlines how deep learning can serve as a framework for solving these problems, typically through supervised methods. It then discusses the use of deep learning to obtain single-image super-resolution and image enhancement in these data sets.

By K. Gong, E. Berg, S. R. Cherry, and J. Qi

This article discusses applications of machine learning to PET, PET-CT, and PET-MRI multimodal imaging. The authors describe the impact of machine learning both at the detector stage and in quantitative image reconstruction. Also discussed is how a broad array of statistical methods and neural network applications are improving the performance of attenuation and scatter correction algorithms, as well as integrating patient priors into reconstructions based on a constrained maximum-likelihood estimator.

By J. I. Hamilton and N. Seiberlich

This article provides an overview of current research that combines magnetic resonance fingerprinting (MRF) and machine learning, and presents original research demonstrating how machine learning can speed up dictionary generation for cardiac MRF.

By S. Ravishankar, J. C. Ye, and J. A. Fessler

This article reviews how sparsity, data-driven methods, and machine learning have influenced, and will continue to influence, the general area of image reconstruction across modalities. The contribution looks at progress in medical image reconstruction methods with a focus on the two most recent trends: methods based on sparsity or low-rank models, and data-driven methods based on machine learning techniques.

By D. Rueckert and J. A. Schnabel

This article provides a historical overview of how the field of medical image analysis and computing has developed, starting with model-based approaches and evolving to today’s emphasis on data-driven, deep-learning-based efforts. The two approaches are compared, and the advantages and disadvantages of each are noted. The authors observe that while data-driven, deep-learning approaches can often outperform more traditional model-based ideas, using these techniques in clinical scenarios raises a number of challenges, which are discussed.

By L. Shen and P. M. Thompson

This article describes applications of novel and traditional data-science methods to the study of brain imaging genomics. It discusses how researchers combine diverse types of high-volume data sets, including multimodal and longitudinal neuroimaging data and high-throughput genomic data together with clinical information and patient history, to develop a phenotypic and environmental basis for predicting human brain function and behavior.

By H. M. Whitney, H. Li, Y. Ji, P. Liu, and M. L. Giger

This article reviews progress in using convolutional neural network (CNN)-based transfer learning to characterize breast tumors through various diagnostic, prognostic, or predictive image-based signatures across multiple imaging modalities including mammography, ultrasound, and magnetic resonance imaging (MRI), compared to both human-engineered feature-based radiomics and fusion classifiers created through combination of the features from both domains.

By X. Jia, X. Xing, Y. Yuan, L. Xing, and M. Q.-H. Meng

This article reviews and integrates image acquisition and image analysis for cancer screening through wireless capsule endoscopy (WCE). Machine learning and deep learning approaches are being developed to assist in automated polyp recognition, detection, and analysis, with the aim of enhancing the diagnostic accuracy and efficiency of this procedure, which is a critical clinical tool.

By T. Vercauteren, M. Unberath, N. Padoy, and N. Navab

This article reviews how to incorporate the range of prior knowledge and instantaneous sensory information from experts, sensors, and actuators into computer-assisted interventions, and how to learn a representation of the surgery or intervention among a mixed human-AI team of actors. It also discusses the design of interventional systems and associated cognitive shared control schemes for online uncertainty awareness when making decisions in the operating room (OR) or interventional radiology (IR) suite, noting that this is critical for producing precise and reliable interventions.

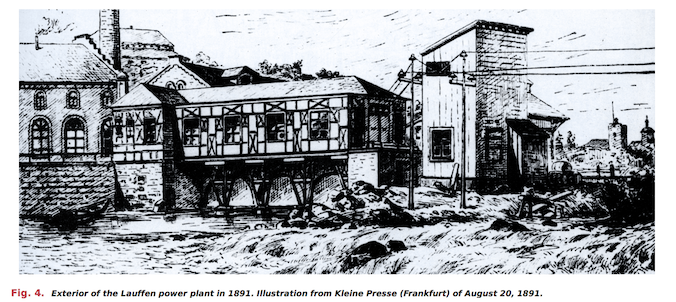

Scanning Our Past

By A. Allerhand
